Read Data Like a Skeptic

Data can be messy, subjective, and even manipulated, which is exactly why data acumen matters.

We see numbers all the time at work. We track progress, allocate resources, consume research, and pitch ideas. But how well do we actually understand what we’re looking at?

If you’re making decisions or supporting the people who do, you are accountable for the number and for understanding what is behind it.

Today we’re talking about how to look critically at the data you use to make decisions at work. We’ll cover:

Why to revisit what you think you know about your data

How to determine data’s strengths and limits by looking at its source

How to uncover hidden assumptions

How to balance the decision against the risk, ambiguity, and bias in the data

This isn’t about becoming a data analyst. It’s about strengthening the skills to size up data and then use it to confidently choose a path forward.

Get to know your data

Most misunderstandings begin here. We too often believe that we are clear on familiar metrics…until someone asks us to explain them. That’s when we realize the gaps.

When we don’t fully understand what the data represents, we draw incorrect conclusions. Everyday sources of confusion include:

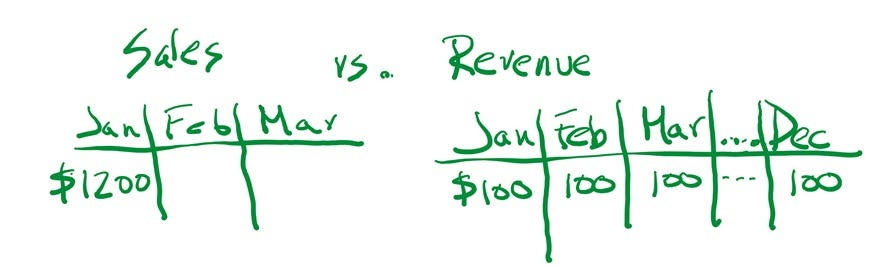

Familiar terms that are used loosely. Customers and users. Sales and revenue. These sound interchangeable, but they aren’t, and the data can show up differently. For example, let’s say you sell a $1,200 annual subscription. The sale shows up in full in the month it closes, but the business recognizes the revenue evenly over the duration of the subscription.

One label, many definitions. Metrics like “traffic” sound intuitive, but have many meanings. “Traffic” can mean page views, sessions, visitors, or unique visitors.

Missing context. A percentage or rate change sounds clear until you ask: from what to what? Statements like: “Lead conversion rates have grown 10%!” have little meaning until clarified into: “Last year, the internal sales team converted 10 out of every 100 website leads into a sale. This year, they are converting 11 out of every 100.”

Vague or inconsistent classifications. A report documents “adverse reactions” for a new drug. But what does that mean? A mild rash or hospitalization? Definitions and classifications vary by industry or even by department in the same organization.
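To make the sales versus revenue distinction concrete, here is a minimal Python sketch of the $1,200 subscription example. It assumes simple straight-line recognition by month, starting in the month the sale closes. The function name `monthly_view` and its parameters are made up for illustration, and real revenue recognition rules can be more involved than this.

```python
def monthly_view(price, term_months, close_month, horizon):
    """Return (sales, revenue) by month for one subscription.

    Assumption (illustrative only): revenue is spread evenly across the
    term, beginning in the month the sale closes.
    """
    sales = [0.0] * horizon
    revenue = [0.0] * horizon

    # Sales: the full amount appears in the month the deal closes.
    sales[close_month] = float(price)

    # Revenue: an equal slice is recognized in each month of the term.
    per_month = price / term_months
    for month in range(close_month, min(close_month + term_months, horizon)):
        revenue[month] = per_month

    return sales, revenue


sales, revenue = monthly_view(price=1200, term_months=12, close_month=0, horizon=12)
print(sales[0], revenue[0])      # 1200.0 100.0
print(sum(sales), sum(revenue))  # 1200.0 1200.0
```

Both views total $1,200 over the year, but a report for the first month shows $1,200 in sales and only $100 in revenue. Which one you are looking at changes the story.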

Even if a term seems obvious, confirm that what you think the data represents is accurate. Clarify and define, don’t assume.
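The lead conversion example above is a good place to see why "from what to what?" matters. The sketch below, with a hypothetical helper named `describe_change`, computes both ways of describing the same shift: the change in percentage points and the relative growth.

```python
def describe_change(converted_before, leads_before, converted_after, leads_after):
    """Return (percentage-point change, relative change in percent)."""
    rate_before = converted_before / leads_before
    rate_after = converted_after / leads_after

    # Absolute change: difference between the two rates, in percentage points.
    point_change = (rate_after - rate_before) * 100

    # Relative change: growth measured against the starting rate.
    relative_change = (rate_after - rate_before) / rate_before * 100

    return point_change, relative_change


# 10 out of 100 leads last year, 11 out of 100 this year.
points, relative = describe_change(10, 100, 11, 100)
print(f"{points:.1f} percentage points, {relative:.1f}% relative growth")
# 1.0 percentage points, 10.0% relative growth
```

"Up 10%" and "up 1 point" are both accurate descriptions of the same data. If a headline does not say which one it means, ask.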

The source shapes your interpretation

Where the data came from reveals its strengths and its limits. This context will determine how much trust you put into a number’s accuracy and reliability.

For EXTERNAL data sources, ask:

Who published the data? Established, credible sources - like census data or a reputable research firm - are generally more reliable, especially when the organization specializes in data and has a verifiable track record. In contrast, vendors or lobbyists may provide research, but they also have incentives that could bias how results are framed or interpreted.

Where do they get their data? How did they collect it? Does the data adequately represent the population being measured? What is the sample size? Strong data sources explain how they collected their data and acknowledge limitations.

Is the methodology available? How information is collected, defined, and calculated determines whether it is actually relevant to your situation. Credible sources will usually explain how they collect and analyze their data and what limitations apply. If someone dodges your questions, be cautious.

For INTERNAL data, ask:

What is the data source? Some systems cover only part of the business when you are trying to understand the whole. Others may have known quality issues when you need greater accuracy.

Is it a recurring report or a one-off request? Ad-hoc reports require more scrutiny. Regular reports have usually been stress-tested and refined over time.

Who built the report? Different departments bring different lenses. Finance may be more conservative than Sales. Marketing may define ‘customers’ differently than Operations. Each team has different data access, expertise, and perspectives. These factors influence what gets measured and how it gets presented.

No data is perfect, but solid data can withstand scrutiny.

Uncover assumptions behind the data

Every number is built on choices. Some choices are clear (like a time frame), but many are invisible unless you ask. Let’s start with a few that cause the most trouble:

The sample population. Don’t assume a data point represents the full picture. If a survey on fried chicken sandwiches is conducted with 1,000 college students, it doesn’t represent national preferences - it represents college student preferences. So always ask: Who’s actually included in this data? And who’s missing?

Projections and estimates. These are built on assumptions, and you may not agree with the approach or the level of risk those choices represent. For example, a revenue forecast will include assumptions like: average sales price, expected growth or decline, revenue from new clients vs. existing clients or new products vs. existing products. Don’t hesitate to have these discussions. They are usually an invaluable step for refining estimates and projections.

Check the math. You don’t need to audit everything, but a quick spot check often catches errors before you get too far. A 2025 Canva survey reported that 57% of respondents said they make spreadsheet errors that impact their work. With numbers like that, it’s not worth just assuming the math is right.

Data skeptics remember to probe on these areas because they’ve learned (often the hard way) how easy it is to act on a false assumption.
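A spot check does not need to be elaborate. As one possible sketch, the snippet below recomputes a total from its line items and compares it to the total a report claims. The function name `spot_check_total` and the regional figures are invented purely for illustration.

```python
def spot_check_total(line_items, reported_total, tolerance=0.01):
    """Recompute a total and compare it with the reported figure.

    Returns (matches, recomputed_total, difference).
    """
    recomputed = sum(line_items)
    difference = reported_total - recomputed
    return abs(difference) <= tolerance, recomputed, difference


# Made-up regional sales figures and a made-up reported total.
regional_sales = [125_000, 98_500, 143_250]
matches, recomputed, difference = spot_check_total(regional_sales, reported_total=376_750)
print(f"matches={matches}, recomputed={recomputed}, off by {difference}")
# matches=False, recomputed=366750, off by 10000
```

A mismatch like this does not tell you which number is wrong, only that it is worth asking before you build on it.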

“Good enough” is a real answer

You won’t always have perfect information. The question is whether it’s good enough for the decision you are making.

Match the scrutiny to the stakes. Low-stakes decisions, like an A/B test tweak, may not require bulletproof data. High-impact decisions, like a large investment or launching in a new country, warrant more scrutiny.

Watch for false precision. Specific numbers can signal more certainty than actually exists. For example: “40.2% of the population will go on vacation this summer, up from 39.8% last summer.” But if these figures come from two consumer surveys of 100 people each, the extra decimal place signals a level of certainty that does not exist.

Treat surprises as signals. If something looks off - up, down, or just unexpected - pause. Surprises are your cue to investigate. It might be a real shift. It might be a data issue. Either way, it’s worth understanding.

Ranges can be good enough too. If you have a small client base, it could be good enough to use a small range when analyzing annual client survey data. “Approximately 3%-5% of clients plan to increase their marketing budgets, which is about the same as last year.” Being directionally right is sometimes enough, but you need to know that going in.
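To put a rough number on the false precision in the vacation survey example, here is a small sketch using the standard margin-of-error formula for a proportion. It assumes a simple random sample and a 95% confidence level (z = 1.96). Those assumptions are mine, not details of any actual survey.

```python
import math


def margin_of_error(share, sample_size, z=1.96):
    """Approximate margin of error for a survey proportion.

    Assumes a simple random sample; z=1.96 corresponds to roughly 95% confidence.
    """
    return z * math.sqrt(share * (1 - share) / sample_size)


for label, share in [("last summer", 0.398), ("this summer", 0.402)]:
    moe = margin_of_error(share, sample_size=100)
    print(f"{label}: {share:.1%} +/- {moe * 100:.1f} points")
# last summer: 39.8% +/- 9.6 points
# this summer: 40.2% +/- 9.6 points
```

With only 100 respondents, each estimate could plausibly be off by nearly 10 points in either direction, so a 0.4-point difference between the two surveys says very little. Reporting "about 40%, roughly the same as last year" would be more honest.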

Spot the bias

Information is produced and interpreted by people. Everyone has a lens, and sometimes an agenda. This doesn’t make their data wrong, but it does mean you should consider that bias in how you apply that information.

Motivation matters. Incentives shape how data is framed and presented. A pharmaceutical company wants positive results for a new drug or product. A salesperson’s forecasts are influenced by how they are compensated. From students to policymakers, people interpret data through their own lens. Know the agenda, and factor it in.

Over-generalizing. When data is missing or hard to find, it’s tempting to apply one piece of information to perceived similar situations. But what is true for one group, market, or channel may not apply more broadly. Instagram users do not necessarily represent all social media users. New York City restaurant trends don’t predict what’s hot in Texas, and the top-selling toys in the U.S. won’t match those in France.

History doesn’t always predict the future. Past patterns can break. A competitor has a breakthrough moment. There’s a technology shift, a viral moment, or a market trend that quietly hits a tipping point. “It’s always been this way” is an assumption, not a fact.

Why data skepticism matters

Data is never just numbers. It’s a set of choices about what to measure, how to frame it, and who it represents. Your job isn’t to audit every figure. It’s to stay curious enough to ask the right questions, and confident enough to push back when something doesn’t add up.

Additional Reading

Here are two excellent resources from fellow Substack writers if you want to go further on assessing external sources:

Hana Lee Goldin, MLIS, author of Card Catalog, recently wrote a guide to evaluating information sources in the AI age.

Dr Sam Illingworth, author of Slow AI, wrote about how to spot a fabricated source and created a game, Dead Reference, to test your skills.

More related articles from We Dig Data: